Openlayer supports tests based on custom metrics that you write. This guide shows how you can write a custom metric in Python and push it to your project using the Openlayer CLI.

Prerequisites

We are going to use the Openlayer CLI to push the custom metric to your project. Therefore, to follow this guide, you must:

- Install the Openlayer CLI.

- Log in with the Openlayer CLI using the command openlayer login.

- Link your working directory to an Openlayer project with the command openlayer link.

Write the metric

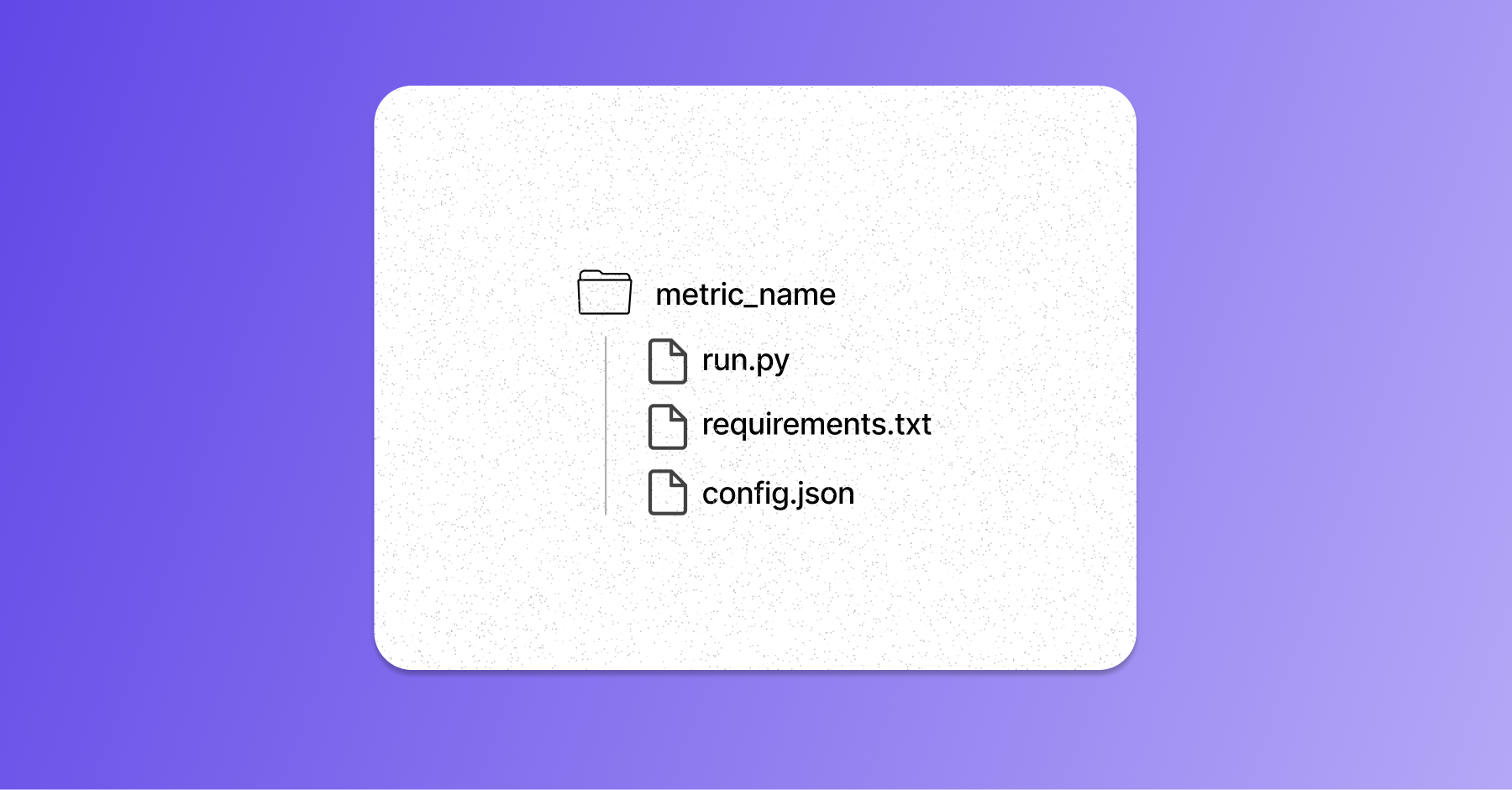

For each custom metric, you must prepare a directory structured as follows:
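As a rough sketch, the directory can be scaffolded with a few lines of Python. This assumes the three files described in this guide (run.py, requirements.txt, and config.json) sit directly inside the metric directory. The helper name scaffold_metric is hypothetical and not part of the Openlayer CLI or SDK.

```python
from pathlib import Path


def scaffold_metric(root: Path, metric_name: str = "metric_name") -> Path:
    """Create a metric directory holding the three files this guide describes.

    Assumption: the files live directly inside the metric directory.
    """
    metric_dir = root / metric_name
    metric_dir.mkdir(parents=True, exist_ok=True)
    for filename in ("run.py", "requirements.txt", "config.json"):
        # touch() creates an empty file and leaves an existing one untouched
        (metric_dir / filename).touch(exist_ok=True)
    return metric_dir
```

Calling scaffold_metric(Path.cwd()) would create metric_name/ in the current directory. The files start out empty and still need the content described in the sections below.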

Write the run.py

The run.py file contains the custom metric logic. Make sure to create a class that inherits from the BaseMetric from Openlayer’s Python SDK and implements the compute_on_dataset method.

For instance, a run.py for a metric similar to accuracy would implement compute_on_dataset to compare the model outputs against the expected values.
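The accuracy-like logic can be sketched without the SDK installed. In the block below, BaseMetric is a local stand-in defined only so the example runs on its own; in a real run.py you would inherit from the SDK's BaseMetric instead. The dataset representation (a list of dictionaries), the column names output and ground_truth, and the float return type are all assumptions made for illustration and may not match what the SDK passes to or expects from compute_on_dataset.

```python
from typing import Dict, List


class BaseMetric:
    """Local stand-in for the SDK's BaseMetric (assumption, not the real class)."""

    def compute_on_dataset(self, rows: List[Dict[str, str]]) -> float:
        raise NotImplementedError


class ExactMatchAccuracy(BaseMetric):
    """Fraction of rows whose output equals the ground truth."""

    def compute_on_dataset(self, rows: List[Dict[str, str]]) -> float:
        if not rows:
            # Avoid dividing by zero on an empty dataset
            return 0.0
        # "output" and "ground_truth" are hypothetical column names
        correct = sum(1 for row in rows if row["output"] == row["ground_truth"])
        return correct / len(rows)


rows = [
    {"output": "cat", "ground_truth": "cat"},
    {"output": "dog", "ground_truth": "cat"},
    {"output": "bird", "ground_truth": "bird"},
]
score = ExactMatchAccuracy().compute_on_dataset(rows)
print(f"{score:.2f}")  # 2 of 3 rows match, so this prints 0.67
```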

List the dependencies

The requirements.txt file lists all dependencies for your run.py. Make sure to include openlayer>=0.2.0a26 as a requirement as well.

Prepare the config.json

The config.json specifies how to prepare the environment and run your custom metric. It also provides additional information about the metric, which is displayed on the platform. For example, its installCommand and runCommand fields tell Openlayer how to prepare the environment and run the metric.

You can also declare configurable parameters for your metric using the parameterDefinitions field. See Configurable parameters for details.

Push the metric to Openlayer

Now that you have written your custom metric, you can push it to your Openlayer project. To do so, run the openlayer metrics push command for metric_name, the directory created in the previous section.

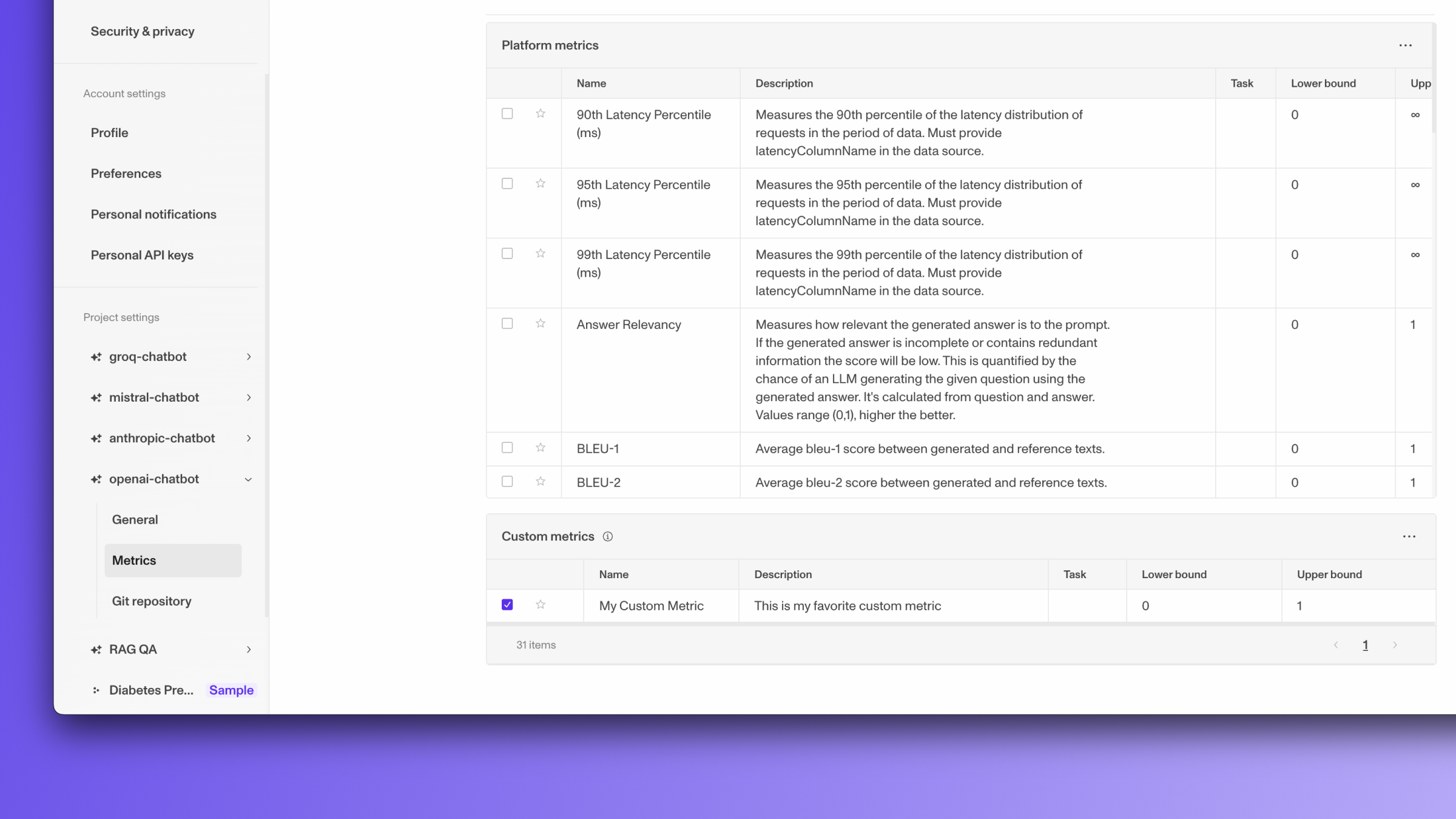

After you push your metrics to the platform, you will see them appear in the project metric settings page like so:

In the example above, we pushed/updated a single metric: metric_name.

However, you can also push multiple custom metrics at once. Assuming you have a directory with all your custom metrics, where each metric_name_i subdirectory contains a custom metric, you can push/update them all at once.

Configurable parameters

Custom metrics can declare configurable parameters that users can adjust when creating tests, without modifying the metric code.

Defining parameters

Add a parameterDefinitions array to your config.json. Each entry in the array can have the following fields:

| Field | Type | Description |
|---|---|---|
| name | string | The parameter name (used as the key in params.json) |
| type | string | One of "string", "number", or "float" |
| required | boolean | Whether the parameter is required when creating a test |
| defaultValue | string, number, or null | The default value used when no value is provided |
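To make the fields above concrete, here is a minimal sketch that builds a parameterDefinitions array in Python and prints it as JSON. The helper make_parameter and the parameter names threshold and label are hypothetical, chosen only for illustration. The printed fragment would sit in config.json alongside the other fields of that file.

```python
import json
from typing import Any, Dict, Optional

# Type names taken from the table above
ALLOWED_TYPES = {"string", "number", "float"}


def make_parameter(
    name: str,
    type_: str,
    required: bool = False,
    default_value: Optional[Any] = None,
) -> Dict[str, Any]:
    """Build one parameterDefinitions entry, rejecting unknown type names."""
    if type_ not in ALLOWED_TYPES:
        raise ValueError(f"unsupported parameter type: {type_!r}")
    return {
        "name": name,
        "type": type_,
        "required": required,
        "defaultValue": default_value,
    }


parameter_definitions = [
    make_parameter("threshold", "float", default_value=0.5),  # hypothetical
    make_parameter("label", "string", required=True),  # hypothetical
]

# None is serialized as JSON null, matching the defaultValue type in the table
print(json.dumps({"parameterDefinitions": parameter_definitions}, indent=2))
```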

Reading parameters in your metric

When Openlayer runs your metric, it writes a params.json file in the same directory as your run.py. This file contains the parameter values configured for the current test (or the defaults if no values were set).

Your metric can read this file at run time to pick up the configured values.
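A minimal sketch of reading the file is shown below. It assumes params.json is a flat JSON object mapping each parameter name to its value, which is suggested by the name field being used as the key in params.json but is not confirmed here. The helper load_params, the parameter name threshold, and the fallback to local defaults when the file is missing are illustrative conveniences for running the metric locally, not documented Openlayer behavior.

```python
import json
from pathlib import Path
from typing import Any, Dict, Optional


def load_params(
    directory: Path, defaults: Optional[Dict[str, Any]] = None
) -> Dict[str, Any]:
    """Read params.json from `directory`, layering its values over `defaults`."""
    params = dict(defaults or {})
    params_path = directory / "params.json"
    if params_path.exists():
        with params_path.open(encoding="utf-8") as f:
            # Assumption: the file holds a flat {name: value} object
            params.update(json.load(f))
    return params


# Inside run.py, the directory is the one containing the script itself:
# params = load_params(Path(__file__).resolve().parent, {"threshold": 0.5})
# threshold = params["threshold"]
```

Keeping the lookup in one helper means compute_on_dataset only deals with plain values and does not need to know where they came from.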

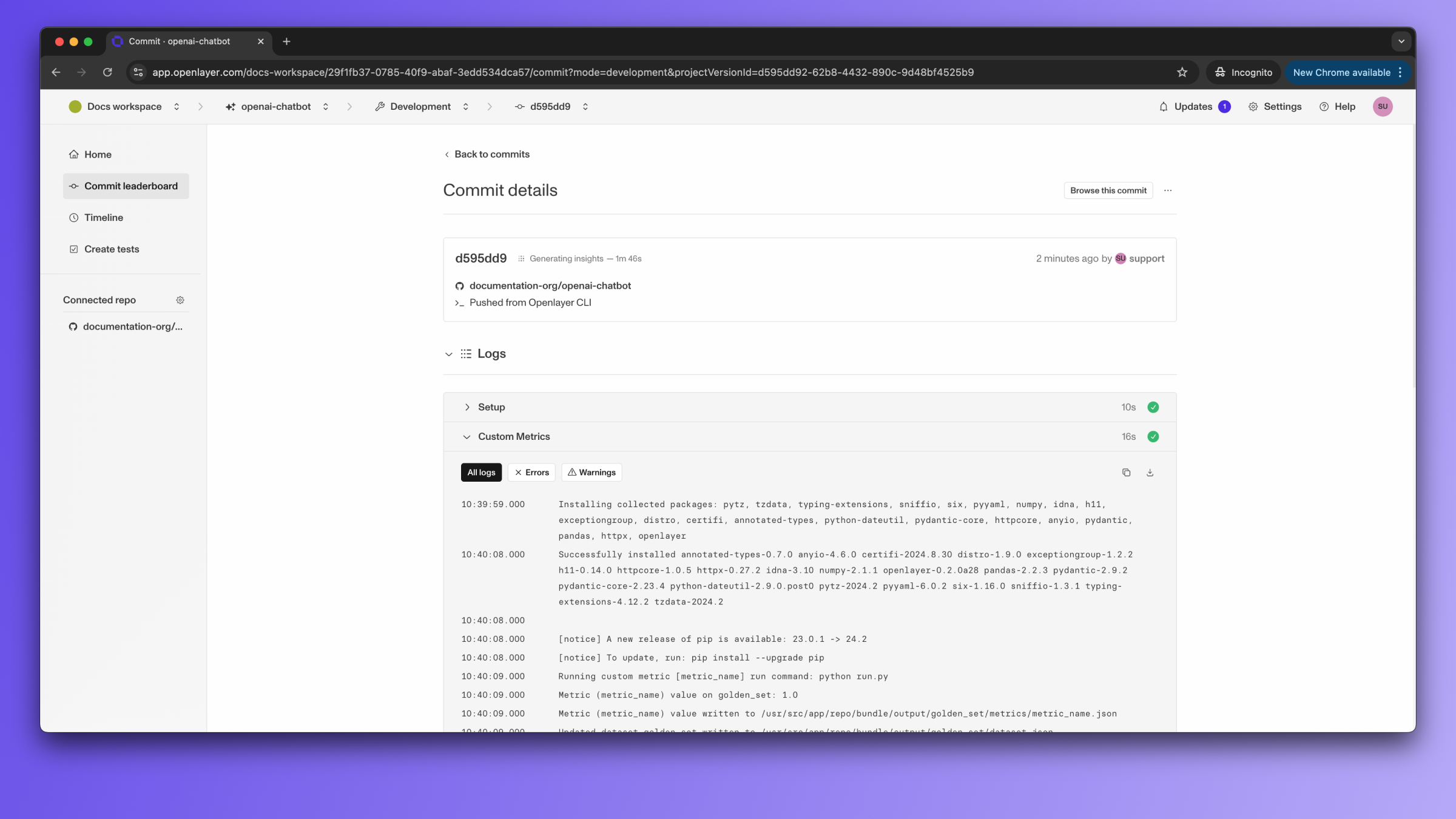

Development mode

Once you have pushed your metrics to Openlayer, any new commit you push to Openlayer will run your selected custom metrics. You can find which metrics are selected in the project’s metric settings page. Newly pushed custom metrics are selected by default. You can view the logs for your custom metric computation in the commit overview page:

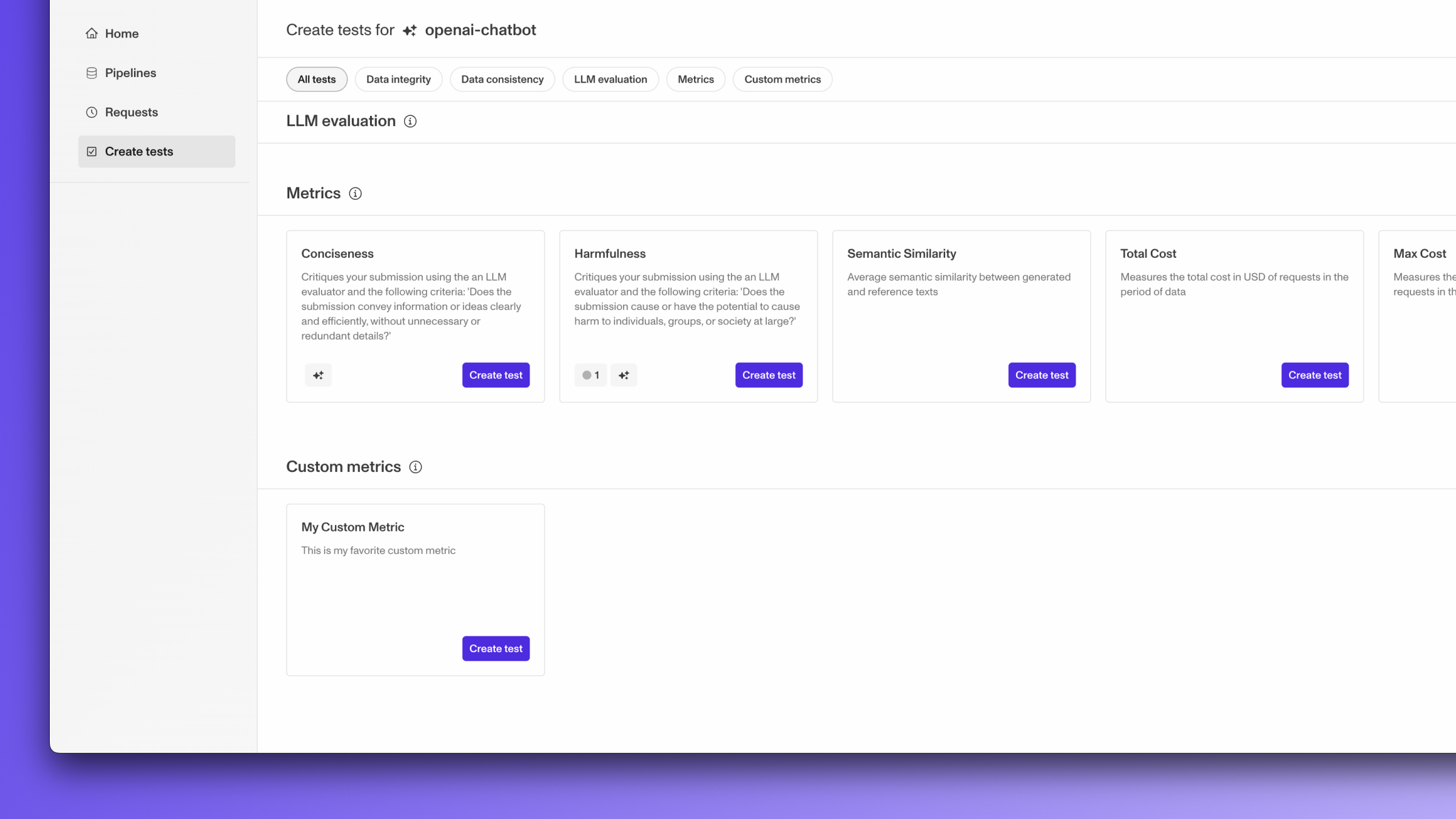

Monitoring mode

Once you’ve pushed your metrics to Openlayer, you can create tests in monitoring mode on your custom metrics. Any custom metric with at least one test associated with it will be run. You will see the option to create tests on your metric in the “Create tests” page:

(Optional) Pre-compute the metric

Typically, Openlayer runs your custom metrics using the information you provide in the config.json (namely, the installCommand and the runCommand).

However, in development mode, you have the option to run your custom metrics before pushing to Openlayer. In this case, Openlayer uses the results you computed instead of executing your code.

This allows you to execute your code in any environment. The only requirement is that you have already generated outputs for the current model + dataset pair (using openlayer batch).

To run your custom metrics on a commit, make sure your metrics directory is in the same place as your openlayer.json.